
8/12/2023 0 Comments Filebeat and elasticsearch Elasticsearch to generate the logs, but also to store them.

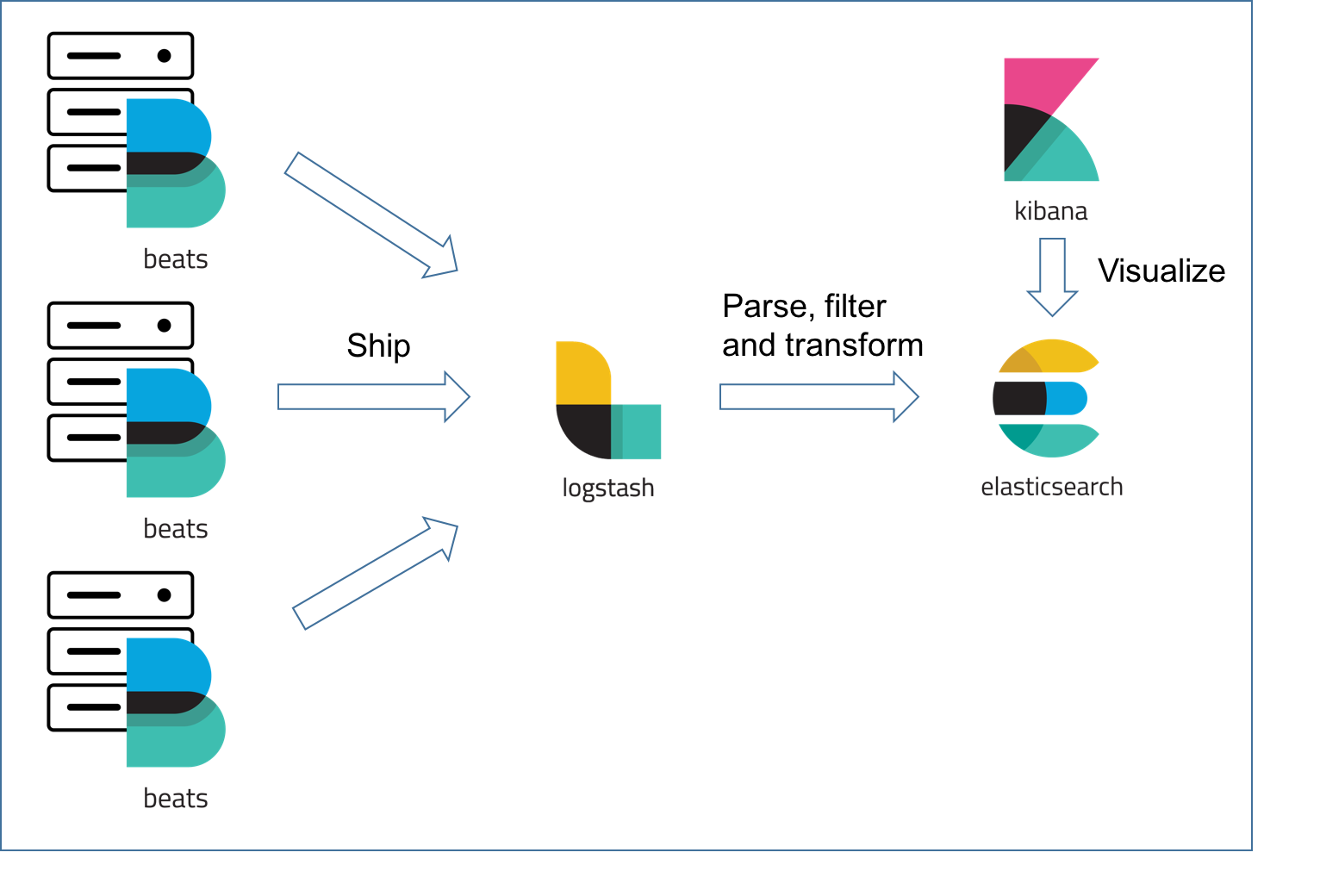

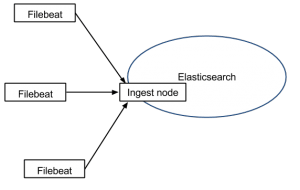

I’m sticking to the Elasticsearch module here since it can demo the scenario with just three components: It doesn’t (yet) have visualizations, dashboards, or Machine Learning jobs, but many other modules provide them out of the box.Īll you need to do is to enable the module with filebeat modules enable elasticsearch.Add an ingest pipeline to parse the various log files.Collect multiline logs as a single event.Set the default paths based on the operating system to the log files of Elasticsearch.For example, the Elasticsearch module adds the features: Installed as an agent on your servers, Filebeat monitors the log files or locations that you specify, collects log events, and forwards them įilebeat modules simplify the collection, parsing, and visualization of common log formats.Ĭurrently, there are 70 modules for web servers, databases, cloud services,… and the list grows with every release. Filebeat and Filebeat Modules #įilebeat is a lightweight shipper for forwarding and centralizing log data. If you’re only interested in the final solution, jump to Plan D. While writing another blog post, I realized that using Filebeat modules with Docker or Kubernetes is less evident than it should be. Adding Docker and Kubernetes to the Mix.Consult the Kibana documentation for information about searching the log entries.įlexNet Embedded 2021. For information on index patterns, see 6.Ĭlick Discover (in the grid menu) this should display license server log entries. When asked, set as the primary time field. Open Kibana with a browser by going to In Kibana's home page, click Connect to your Elasticsearch index.Ĭreate an index pattern of 'lls-logs-*'. Start filebeat running to start sending log entries to Logstash: Start the license server (if it is not already running). You can then view the entries in Kibana.īring up the Elastic Stack by executing this command from the demo directory: Perform the steps below to log entries to logstash. 
In your file, replace “acme” with your producer name, as found in producer-settings.xml.

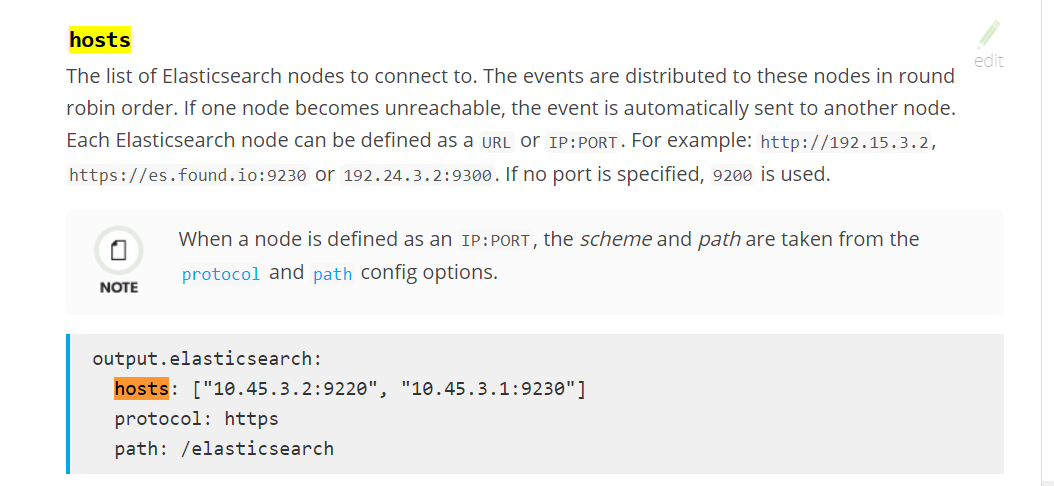

Note that this sample uses the producer name “acme”. The filebeat distribution contains an example filebeat.yml file. In this case the configuration can be found in /etc/filebeat/. Using the package manager will install it as a system service with systemd bindings. You can obtain a copy from On Linux you can also use your package manager (DEB, RPM) to install filebeat. This tool will send JSON log entries to the Elastic Stack. You can now build the Elastic Stack by executing this command in the demo directory :ĭownload and expand filebeat into the filebeat directory. # Use single node discovery in order to disable production mode and avoid bootstrap checksĪdd a Logstash configuration such as the following to the demo directory: # minimum_master_nodes need to be explicitly set when bound on a public IP RUN bin/elasticsearch-users useradd -r superuser -p esuser admin # FileRealm user account, useful for startup polling. elasticsearch.yml /usr/share/elasticsearch/config/elasticsearch.yml Image: /kibana/kibana:7.9.1Ĭreate the following files in the elastic search directory.ĬOPY. monitoring-data:/usr/share/elasticsearch/data Note that this demo uses the producer name “acme”. Use the sample below to create the docker-compose.yml file. Start the license server using the following command from the server directory :Ĭonfirm that log files with a. This causes logs to be written in JSON format to the default location of $HOME/flexnetls/$PUBLISHER/logs.

Add the following entry to the top section of local-configuration.yaml: To prepare the local license server for logging to a Docker installation: 1.Ĭopy the local license server files- flexnetls.jar, producer-settings.xml, and local-configuration.yaml-into the server directory.Ĭonfigure the server for logging in JSON format. This step assumes that Docker is installed. This demo uses the following directory structure:įollow the steps below to prepare the server to log to a Docker installation. This section is split into the following steps: 1. However, it is not recommended for production use, due to insufficient high availability, backup and indexing functionality. This configuration enables you to experiment with local license server logging to the Elastic Stack. Log data is persisted in a Docker volume called "monitoring-data". This section explains how to log to a Docker installation of the Elastic Stack (Elasticsearch, Logstash and Kibana), using filebeat to send log contents to the stack.
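Before pointing filebeat at the license server logs, it can help to check that the files really contain JSON. The following is a minimal sketch of such a check, not part of the FlexNet or Filebeat tooling. It assumes each log entry is a single JSON object on its own line (the text only says the logs are written in JSON format), and the helper names `default_log_dir` and `count_json_entries` are made up for illustration.

```python
import json
from pathlib import Path
from typing import Tuple


def default_log_dir(publisher: str) -> Path:
    """Build $HOME/flexnetls/$PUBLISHER/logs, the default location named in the text."""
    return Path.home() / "flexnetls" / publisher / "logs"


def count_json_entries(log_dir: Path) -> Tuple[int, int]:
    """Return (valid, invalid) counts of non-empty lines in the files of log_dir.

    Assumption: one JSON object per line. Lines that fail to parse, or that
    parse to something other than an object, are counted as invalid.
    """
    valid = invalid = 0
    if not log_dir.is_dir():
        # Nothing has been written yet (or the path is wrong).
        return valid, invalid
    for path in sorted(log_dir.iterdir()):
        if not path.is_file():
            continue
        with path.open(encoding="utf-8", errors="replace") as handle:
            for line in handle:
                line = line.strip()
                if not line:
                    continue
                try:
                    parsed = json.loads(line)
                except json.JSONDecodeError:
                    invalid += 1
                    continue
                if isinstance(parsed, dict):
                    valid += 1
                else:
                    invalid += 1
    return valid, invalid
```

Calling `count_json_entries(default_log_dir("acme"))` after starting the license server would then give a rough idea of whether JSON logging is active; a result of `(0, 0)` suggests that no log files have been written yet.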

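The "collect multiline logs as a single event" feature mentioned for the Elasticsearch module is easier to picture with a small example. The sketch below is only an illustration of the idea, not Filebeat's implementation: it assumes that every new event starts with "[" followed by a four-digit year, and the names `EVENT_START` and `group_multiline` are hypothetical.

```python
import re
from typing import Iterable, List

# Hypothetical start-of-event pattern: "[" followed by a four-digit year and "-".
EVENT_START = re.compile(r"^\[\d{4}-")


def group_multiline(lines: Iterable[str], start: re.Pattern = EVENT_START) -> List[str]:
    """Join continuation lines (for example stack traces) onto the preceding event."""
    events: List[str] = []
    for raw in lines:
        line = raw.rstrip("\n")
        if start.match(line) or not events:
            # A line matching the pattern opens a new event; so does the very
            # first line, even if it does not match.
            events.append(line)
        else:
            # Anything else is treated as a continuation of the previous event.
            events[-1] += "\n" + line
    return events
```

Fed a log line followed by a Java stack trace, this returns one event that contains the whole trace instead of one event per line, which is the behaviour the module sets up so that you don't have to configure it by hand.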